Yujin Sung

Hi!😀 I'm an M.S. student at Korea University in the Vision & AI Lab (Advisors: Prof. Jungbeom Lee and Prof. Jinkyu Kim). I earned a Bachelor's degree in Computer Science from Korea University.


Research

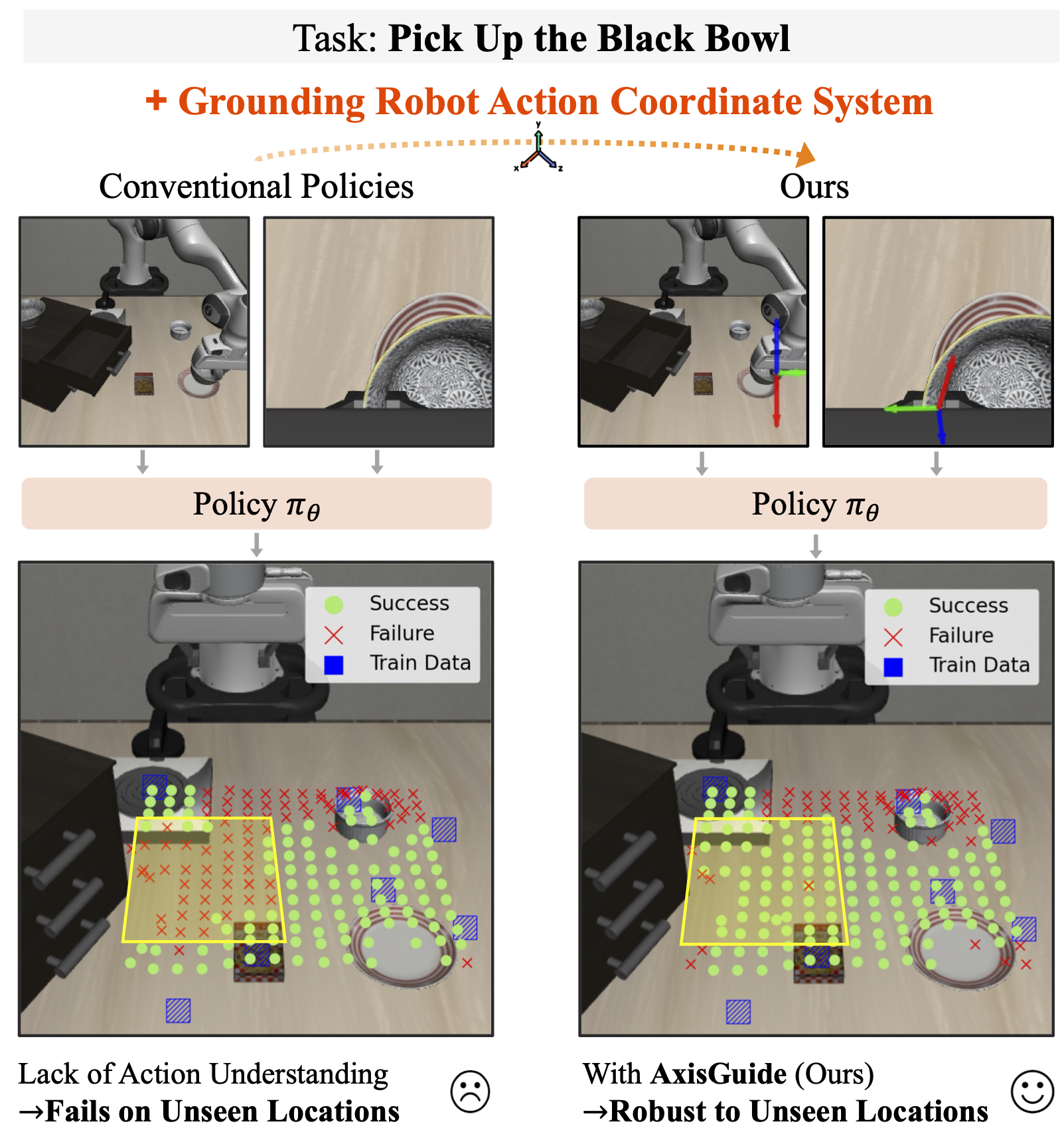

RSS, 2026

project

A framework that injects action-coordinate cues into RGB observations to explicitly ground the robot's action space, improving zero-shot execution and robustness in visuomotor manipulation across diverse environments.

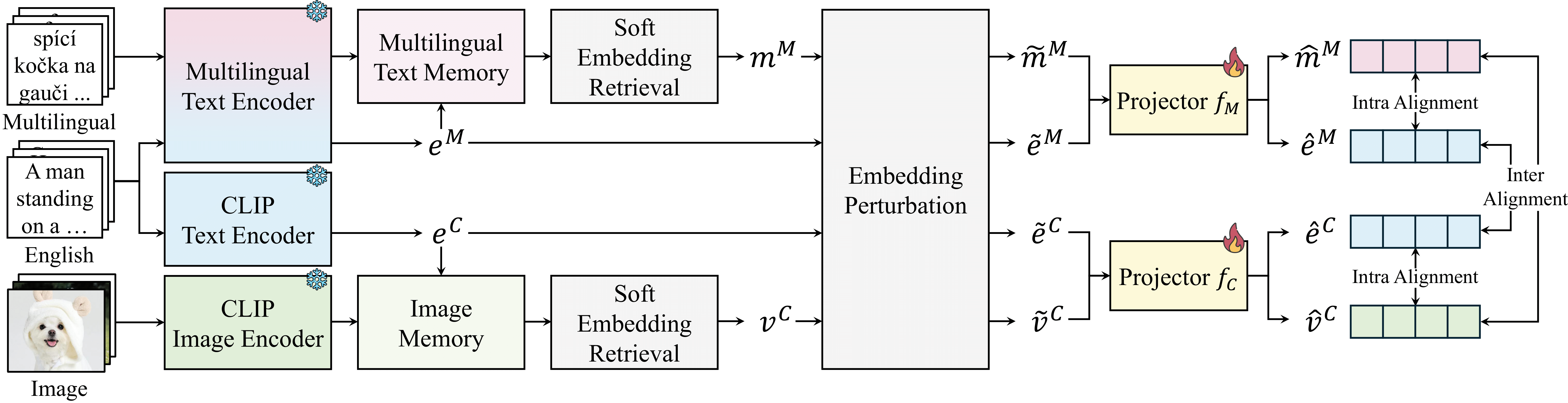

AAAI, 2026

project / paper / code

A lightweight framework that enables multilingual vision-language alignment for underrepresented languages by using English as a semantic pivot, without requiring paired supervision.

Website template from Jon Barron.